Given the above, the brand, description and price of all products on of the search results can be stored in a pandas dataframe as follows. soup_sephora.findAll(class_ = "product-price") The code below then returns the prices for all products on in a Python list. In the instance of the YSL foundation priced at $95 as shown in the first image below, this is embedded in the soup_sephora object as a text string under the “product-price” class as shown in the second image below, which can be located by searching for “$95” in a Microsoft Word document. By way of example, the price attribute of each product on is stored in the “product-price” class in the HTML structure, which can be identified by doing a “CTRL F” on the price of a given product. I would recommend pasting the HTML codes (stored in the soup_sephora object) in a searchable document type such as Microsoft Word or Notepad, as it helps identify the attributes we need to query to return the data of interest. # url of page 1 of a search for "foundation" on the Sephora website url = " " # The option below prevents Chrome from physically opening options = webdriver.ChromeOptions() options.add_argument('-headless') # Download and specify the location of the chromedriver driver = webdriver.Chrome( executable_path = r'C:\Users\Jin\Documents\Webscraping\Drivers\chromedriver.exe', chrome_options = options ) driver.get(url) # Return the HTML codes soup_sephora = BeautifulSoup(driver.page_source, 'lxml') To scrape for brand, description and price of the cosmetic products respectively, we start by parsing the HTML codes of the website to a Python object named soup_sephora as follows. We’ll then attempt to use Google Chrome to scrape the data of interest on ‘’ of the search, then build onto this scrape with a For-Loop over all other pages. from bs4 import BeautifulSoup from selenium import webdriver import pandas as pd # for storing data in a dataframe

We’ll start by importing the Python libraries as follows. To demonstrate this use case, we’ll scrape from the Sephora website data in relation to a search for “foundation” (don’t judge). Use Case 1: Web Scraper for Paginated Search performing certain actions in the Google Chrome or Firefox browser). In particular, the BeautifulSoup library provides the functionality to search for, query and return data from the HTML codes behind a website, whereas the Selenium library supports browser automation (e.g. The web scrapers for the purpose of this article were built in Python using primarily the BeautifulSoup and Selenium libraries. This means that contents visible to a user on the ‘front-end’ of a website are stored in and can be downloaded by querying the relevant layers of the HTML codes. These codes are typically compiled with some general rules that all websites follow. Most of the contents user encounters on a website is actually the output of some HTML codes. Downloading images hosted on websites (“Image Scrape”)Ī Non-technical Introduction to Web Scraping.

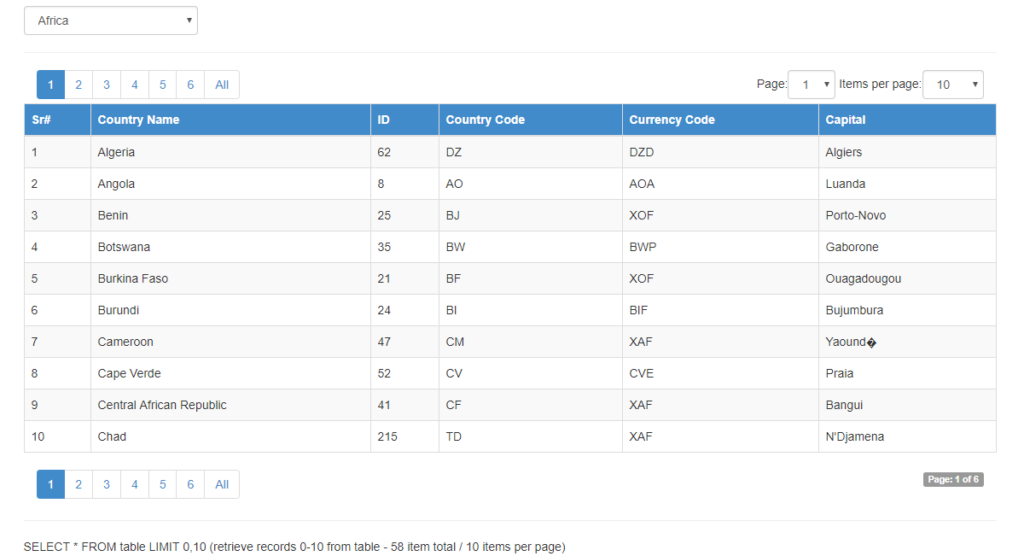

Searching websites which return more search results with a manual down-scroll (“Infinite Scroll”).Searching websites which return search results across multiple pages (“Paginated Search”).This article provides a practical step-by-step guide of such web scraper for the following three (3) common use cases: tabular) data with the use of a web scraper.

I then explored a case for potentially automating the exercise of manually converting contents on a website into structured (e.g. I expressed my frustration about the manual nature of this to my partner, who expressed similar frustration about an e-commerce website. I was browsing the internet for a new laptop recently on a local retailer’s website, and finding myself having to manually note down the brand, spec and price for multiple laptops (for comparison purpose) whilst going through multiple pages of search results. Photo by charlesdeluvio on Unsplash Motivation
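As a rough illustration of the extraction step in this use case (querying a class such as “product-price” with BeautifulSoup, collecting the results in a pandas dataframe, then repeating over several pages with a for-loop), here is a minimal sketch. It runs on small invented HTML strings rather than the live Sephora page, so it is not the site's actual markup. Only the “product-price” class comes from the article; the “product-brand” and “product-name” classes, the sample products other than the $95 YSL price, and the helper names parse_page and scrape_pages are assumptions made for illustration.

```python
from bs4 import BeautifulSoup
import pandas as pd

# Invented stand-ins for driver.page_source on two pages of search results.
# "product-brand" and "product-name" are hypothetical class names.
PAGE_1 = """
<div><span class="product-brand">YSL</span>
     <span class="product-name">Example Foundation</span>
     <span class="product-price">$95</span></div>
<div><span class="product-brand">Brand B</span>
     <span class="product-name">Example Foundation B</span>
     <span class="product-price">$60</span></div>
"""
PAGE_2 = """
<div><span class="product-brand">Brand C</span>
     <span class="product-name">Example Foundation C</span>
     <span class="product-price">$42</span></div>
"""


def texts_for_class(soup, class_name):
    """Return the stripped text of every tag carrying the given class."""
    return [tag.get_text(strip=True) for tag in soup.find_all(class_=class_name)]


def parse_page(html):
    """Turn the HTML of one results page into a dataframe of products."""
    soup = BeautifulSoup(html, "html.parser")
    # The three lists must have equal length for the dataframe to build,
    # i.e. every product is assumed to expose all three attributes.
    return pd.DataFrame({
        "brand": texts_for_class(soup, "product-brand"),
        "description": texts_for_class(soup, "product-name"),
        "price": texts_for_class(soup, "product-price"),
    })


def scrape_pages(pages_html):
    """Loop over the HTML of each page and stack the results."""
    frames = []
    for html in pages_html:
        frames.append(parse_page(html))
    return pd.concat(frames, ignore_index=True)


if __name__ == "__main__":
    print(scrape_pages([PAGE_1, PAGE_2]))
```

In a real scrape, each string passed to scrape_pages would be the driver.page_source returned after loading that page's URL, and the class names would need to be confirmed by searching the page's HTML as described in this section.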
